The red, green and blue world

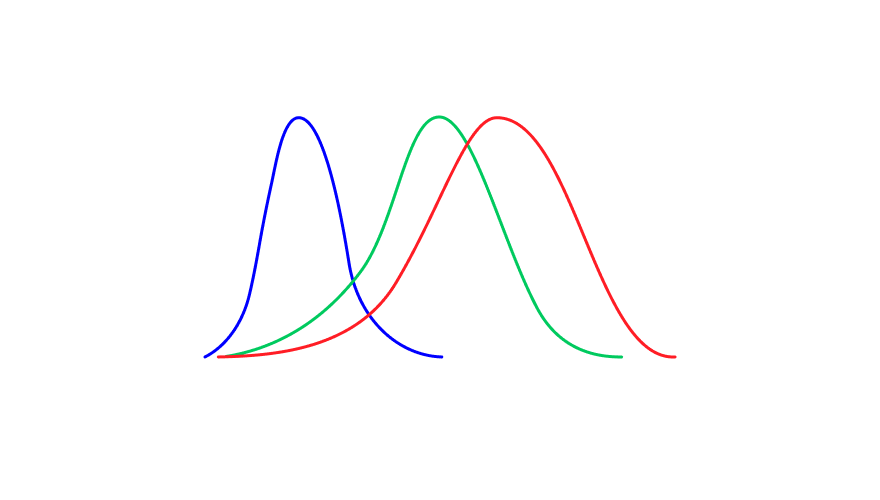

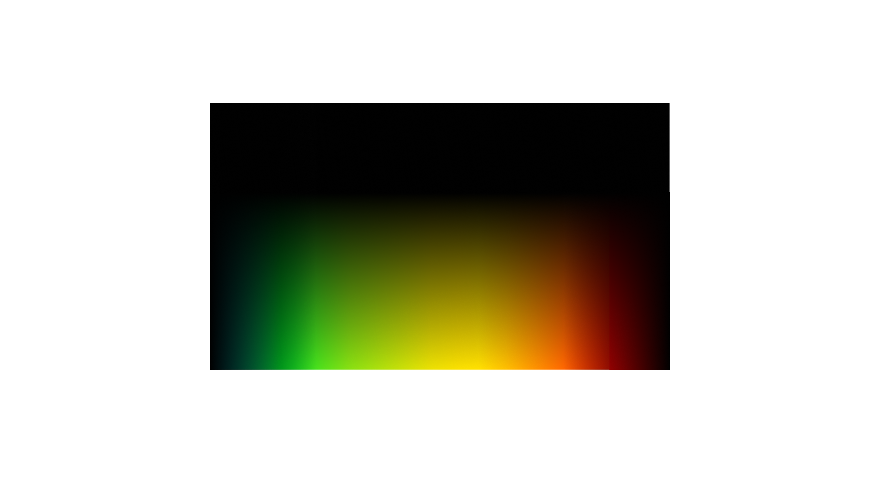

It feels like we can see a vast spectrum of colours: think again of the rainbow, with the different shades of each colour blending into one another. In reality, our eyes contain only three types of colour receptors. These receptors, or cones, are sensitive to the parts of the spectrum corresponding to red, green, and blue light.

This means that every colour you’ve ever seen is, in fact, the result of your brain balancing the relative red, green, and blue light detected by the receptors in your eyes.

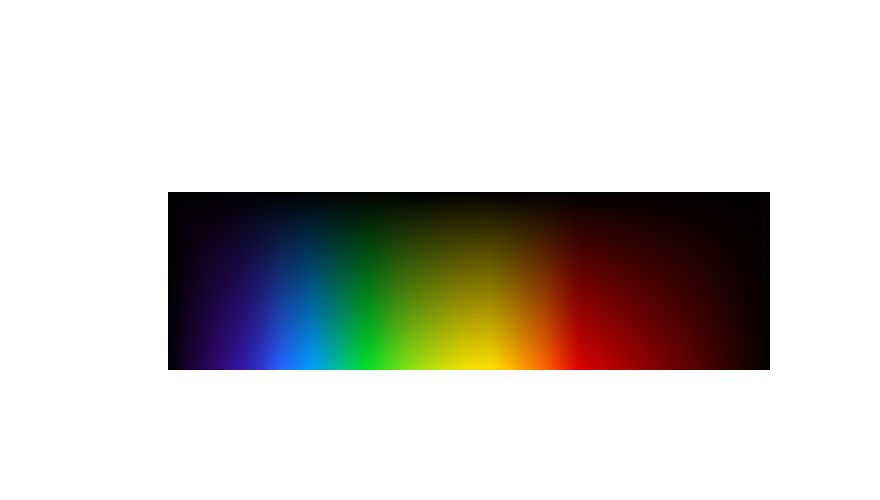

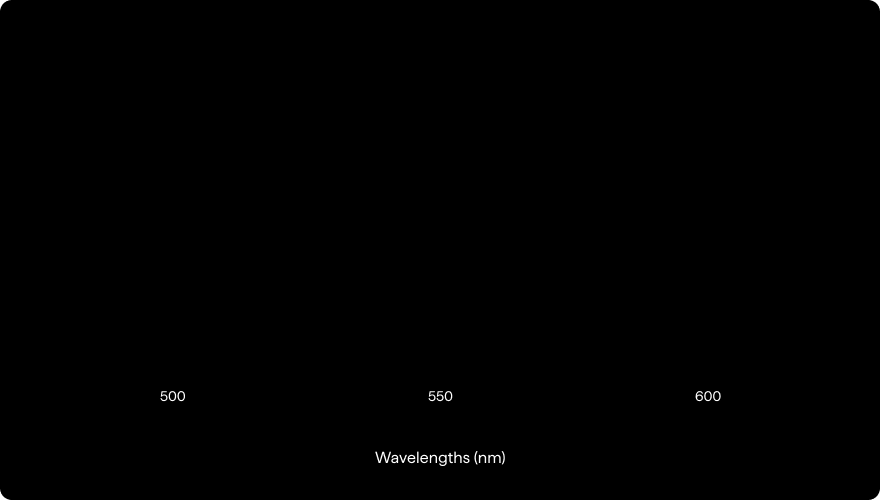

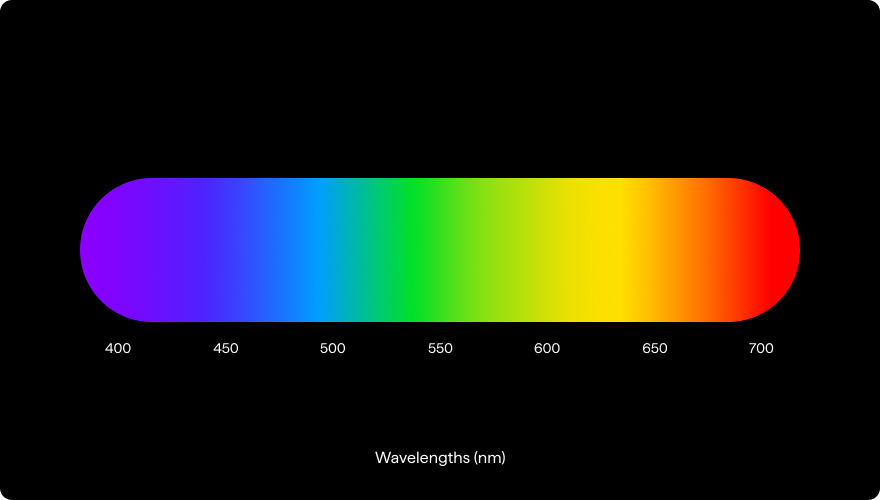

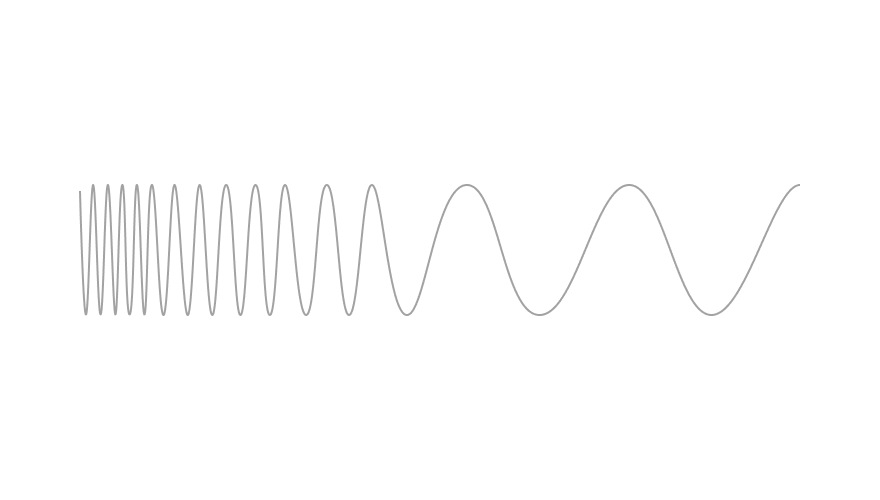

The colour of light is defined by its wavelength (measured in nanometres, nm). These three colours cover the majority of the visible spectrum (400nm to 700nm), with red at a longer wavelength (635nm), blue at a shorter wavelength (445nm), and green in the middle (535nm).

So how do we perceive other colours?

Let’s take yellow, for example. Yellow corresponds to roughly 570-580nm, in between red and green. Therefore, yellow light entering the eye will excite both the red and green receptors, and the brain splits the difference, perceiving the colour yellow.

If the light excites the red receptors slightly more than the green receptors, the brain will react accordingly, perceiving a colour closer to orange. If the light is closer to a greenish yellow (a colour sometimes called chartreuse), the balance shifts the other way: the green receptors are excited slightly more than the red receptors, and again our brain adjusts the colour we see.
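The balance between red and green receptors described above can be sketched with a toy model. This is only an illustration, not a model of real human vision: it assumes each receptor type responds along a bell-shaped curve centred on the wavelengths quoted in this article (635nm, 535nm, 445nm), and the curve width of 50nm is an arbitrary assumption. Real cone sensitivity curves have different shapes. The function names are hypothetical.

```python
import math

# Peak wavelengths (nm) taken from the figures quoted in this article.
PEAKS_NM = {"red": 635.0, "green": 535.0, "blue": 445.0}

# Assumed width of each sensitivity curve (nm); chosen for illustration only.
CURVE_WIDTH_NM = 50.0


def receptor_response(wavelength_nm):
    """Return a toy 0-to-1 excitation level for each receptor type."""
    return {
        name: math.exp(-((wavelength_nm - peak) ** 2) / (2 * CURVE_WIDTH_NM ** 2))
        for name, peak in PEAKS_NM.items()
    }


def red_green_ratio(wavelength_nm):
    """A larger ratio means the red receptors respond more relative to green."""
    response = receptor_response(wavelength_nm)
    return response["red"] / response["green"]


# Compare a greenish yellow, a yellow, and an orange wavelength.
for label, wavelength in [("chartreuse", 555), ("yellow", 575), ("orange", 600)]:
    levels = receptor_response(wavelength)
    print(label, {name: round(value, 2) for name, value in levels.items()})
```

In this sketch the red-to-green ratio rises steadily as the wavelength moves from chartreuse towards orange, while the blue receptors barely respond at all, which mirrors the shift in perceived colour described above.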

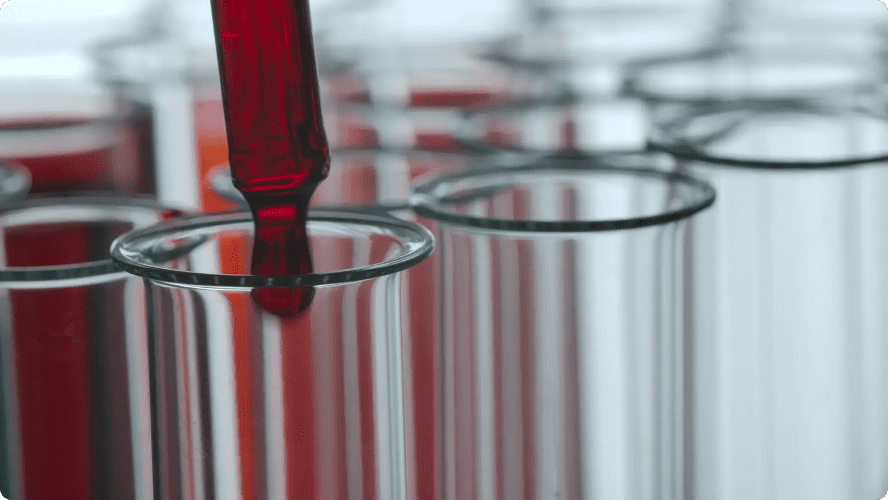

This Red, Green, and Blue (RGB) world we perceive is recreated by traditional photography. Your standard digital camera contains a sensor with filters that capture and combine red, green, and blue light to generate images that match the world we are used to seeing.

This is also how most screens work. The tiny dots (pixels) making up your computer screen output red, green, and blue light, combining or eliminating each to produce a vast array of colours. While modern screens are too high resolution to see these pixels, the red, green, and blue world can be revealed if you zoom in enough.
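As a rough illustration of how a pixel combines light, the sketch below adds red, green, and blue contributions channel by channel. It assumes the common convention of 8-bit values (0 to 255) per channel; the `mix` helper is a hypothetical name used only for this example.

```python
def mix(*lights):
    """Add light sources channel by channel, capping each channel at 255."""
    return tuple(min(255, sum(channel)) for channel in zip(*lights))


RED = (255, 0, 0)
GREEN = (0, 255, 0)
BLUE = (0, 0, 255)

print(mix(RED, GREEN))        # (255, 255, 0), shown on screen as yellow
print(mix(RED, GREEN, BLUE))  # (255, 255, 255), shown on screen as white
```

Switching every channel off gives `(0, 0, 0)`, which the screen shows as black.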

The world beyond visible light

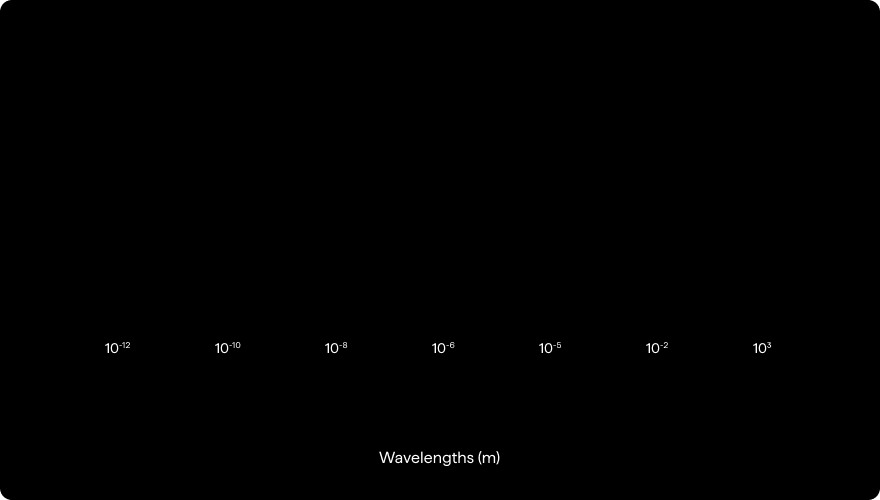

As you may know, the visible spectrum of light (light that can react with our eyes’ receptors) is only a small part of all the light that exists in the world. Humans can only see around 0.01% of the entire spectrum of light. Almost all the light the universe is capable of producing is invisible to us.

While our eyes can’t see light beyond the visible spectrum (wavelengths shorter than 400nm or longer than 700nm), using special equipment and sensors, it is possible to detect much more.
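The boundaries quoted above can be written as a simple check. This is a minimal sketch that uses the article's round figures of 400nm and 700nm; the function name and labels are hypothetical.

```python
VISIBLE_MIN_NM = 400
VISIBLE_MAX_NM = 700


def classify(wavelength_nm):
    """Place a wavelength relative to the visible range quoted in the text."""
    if wavelength_nm < VISIBLE_MIN_NM:
        return "shorter than visible"
    if wavelength_nm > VISIBLE_MAX_NM:
        return "longer than visible"
    return "visible"


print(classify(350))  # shorter than visible
print(classify(535))  # visible
print(classify(900))  # longer than visible
```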

The full spectrum includes terms you are likely familiar with, though you may not have known they refer to light. From the high-energy x-rays used to image our bones and the ultra-violet (UV) light that reacts with our skin to give a suntan, to the infrared radiation we sense as heat, the microwaves used to heat food quickly, and the radio waves sending music and news around the globe, all of these terms refer to parts of the spectrum.

RGB Imaging vs. Hyperspectral Imaging

As we mentioned before, RGB sensors used in standard cameras are typically designed to recreate the world we already perceive, i.e., they are limited to only three colours (or bands) of light. This is great for photography and video, but it wastes much of the information light can offer.

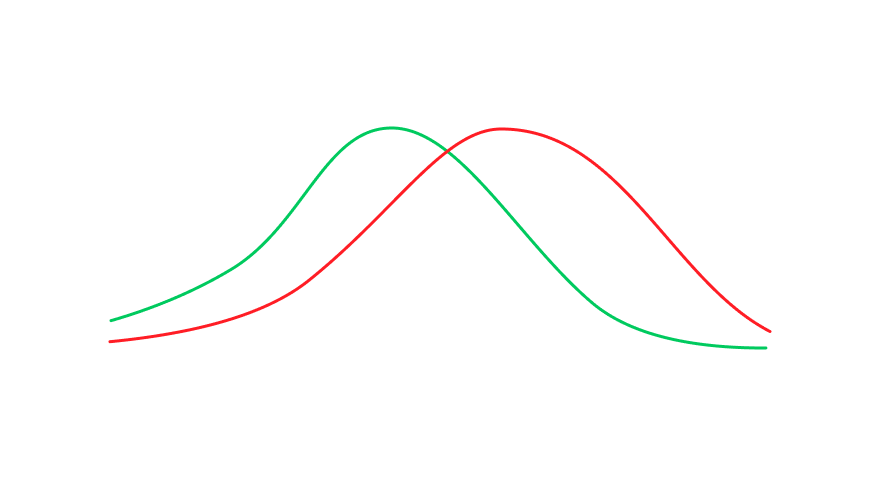

For example, RGB sensors can’t detect light beyond the visible spectrum or investigate spectral information hidden within an object’s reflected light. By spectral information, we mean obtaining a greater number of data points and learning the relative abundance of more than just red, green, and blue light – seeing the reflected light of an object in far greater detail.

An RGB sensor only has three values per pixel. Hyperspectral imaging (HSI) stretches what it is possible to see by detecting many more bands of light, offering 30 or more data points per pixel and expanding beyond the visible spectrum.
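To get a feel for the difference in data volume, the sketch below compares three values per pixel with 30. The image size (640 by 480) and the 400nm to 1000nm range split into equal-width bands are assumptions made purely for illustration; they do not describe any particular camera, and both function names are hypothetical.

```python
def values_per_image(width_px, height_px, bands):
    """Total number of measurements in one image with the given band count."""
    return width_px * height_px * bands


def band_centres(start_nm, end_nm, n_bands):
    """Centre wavelength of each band when a range is split into equal bands."""
    band_width = (end_nm - start_nm) / n_bands
    return [start_nm + band_width * (i + 0.5) for i in range(n_bands)]


# Assumed image size, for illustration only.
WIDTH_PX, HEIGHT_PX = 640, 480

print(values_per_image(WIDTH_PX, HEIGHT_PX, 3))   # RGB: three values per pixel
print(values_per_image(WIDTH_PX, HEIGHT_PX, 30))  # 30 bands: ten times as many

# Assumed spectral range; the first few band centres in nanometres.
print(band_centres(400, 1000, 30)[:3])
```

Each pixel in the 30-band case holds a short spectrum rather than a single colour, which is where the extra detail about an object's reflected light comes from.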

We changed from black and white photography to colour (RGB) in order to better reflect what our eyes can see. HSI takes imaging a step further, moving beyond recreating what our eyes can see to capturing more of the information the world around us provides.

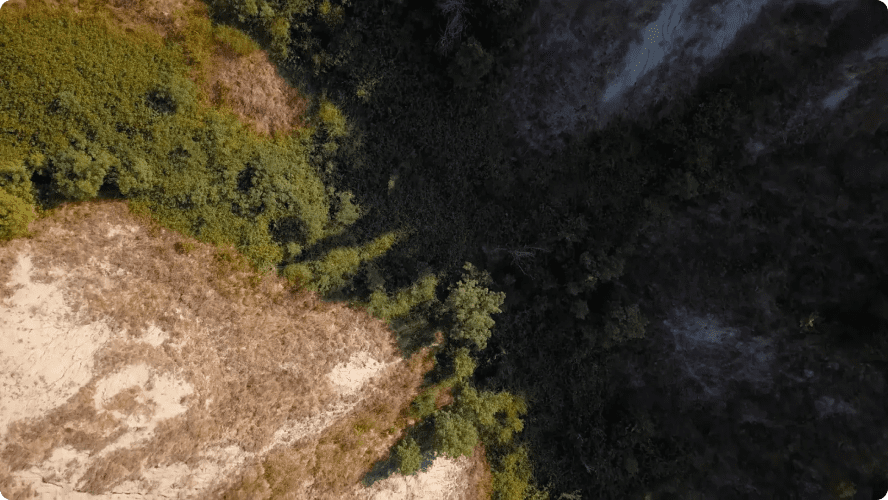

By generating more spectral information (more bands or “colours”), we can image in far greater detail and learn much more about the subjects of an image. RGB cameras are limited to just the colour (based on three data points) and shape of an object. HSI provides information that can reveal a number of other properties, including the temperature of an object or the material it is made of.

The use cases of Hyperspectral Imaging

- Understanding the health of a plant or a crop

- Advanced computer vision applications

- A non-invasive tool with diagnostic and surgical applications in healthcare

Living Optics’ Hyperspectral Imaging Technology

At Living Optics, we are dedicated to a future where hyperspectral imaging is not just a tool for the few but an asset for the many. Currently, hyperspectral cameras are expensive, complex, and limited to specific industrial use cases. We’re changing that. We’re developing hyperspectral imagers that are not only more affordable but also easier to use, breaking down the technical barriers that have kept this technology out of reach for many.

Our vision is to seamlessly integrate hyperspectral imaging into the fabric of everyday life, a commitment that is reflected in our name, Living Optics – because we want to be an integral part of how people see and interact with the world around them.